Micron introduces dense 256GB LPDDR5x module aimed squarely at AI servers

Large language models (LLMs) and modern inference pipelines increasingly demand enormous memory pools, forcing hardware vendors to rethink server memory architecture.

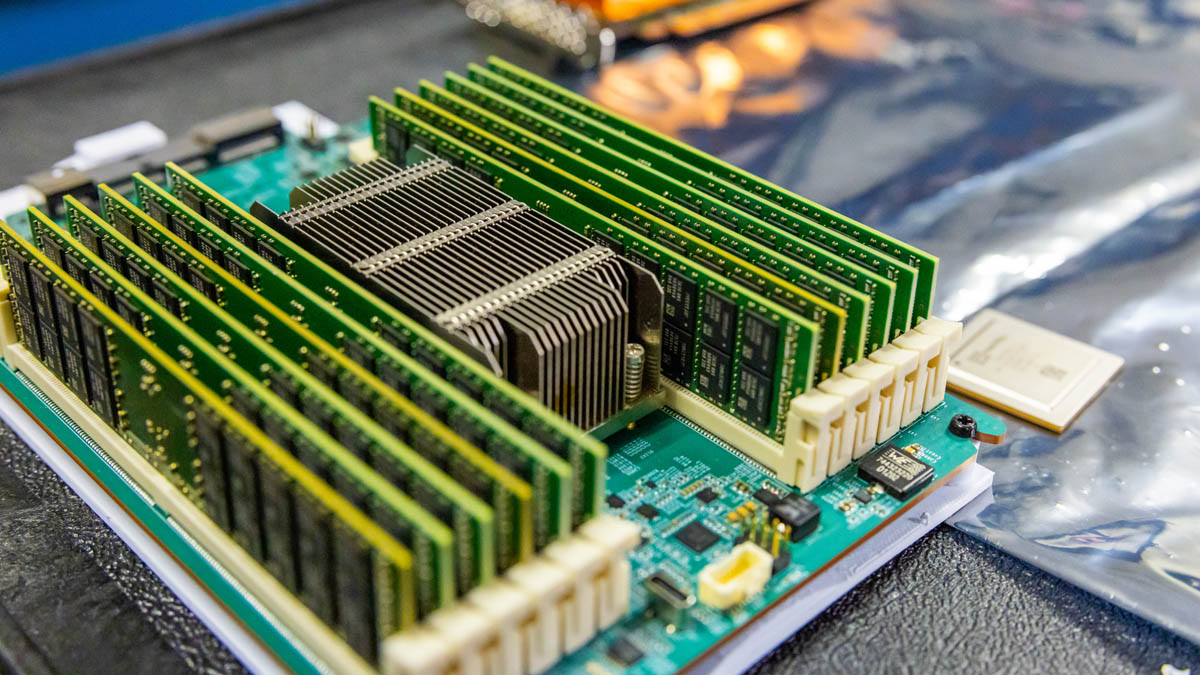

Micron has now introduced a 256GB SOCAMM2 memory module intended for data center systems where capacity, bandwidth, and power efficiency all influence overall performance.

The module relies on 64 monolithic 32Gb (gigabit) LPDDR5x dies, which together total 2,048Gb, or 256GB, forming a dense LPDRAM package that addresses the growing memory footprint of contemporary AI workloads.
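The capacity figure can be checked with a few lines of arithmetic. The sketch below assumes a per-die density of 32 gigabits (Gb), not gigabytes, since that is the only reading consistent with 64 dies adding up to 256GB. The function and constant names are illustrative and do not come from Micron.

```python
# Capacity check for a module built from identical memory dies.
# Assumption: die density is given in gigabits (Gb), 8 bits per byte.
DIES_PER_MODULE = 64
DIE_DENSITY_GBIT = 32
BITS_PER_BYTE = 8


def module_capacity_gbyte(dies: int, die_density_gbit: int) -> int:
    """Return the total module capacity in gigabytes (GB)."""
    return dies * die_density_gbit // BITS_PER_BYTE


capacity = module_capacity_gbyte(DIES_PER_MODULE, DIE_DENSITY_GBIT)
print(f"{DIES_PER_MODULE} x {DIE_DENSITY_GBIT}Gb = {capacity}GB")
```

If each die held 32 gigabytes instead, the same 64 dies would total 2,048GB, eight times the stated module capacity.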


Copyright of this story solely belongs to techradar.com . To see the full text click HERE